- Blog

- The jackbox party pack 5 genres

- Become a lighthouse keeper

- Bee and puppycat

- Experiments er statistical calculations

- Destiny 2 crashing windows explorer cant alttab

- Resume maker io

- Suburbia game list of cities

- Discord nudes

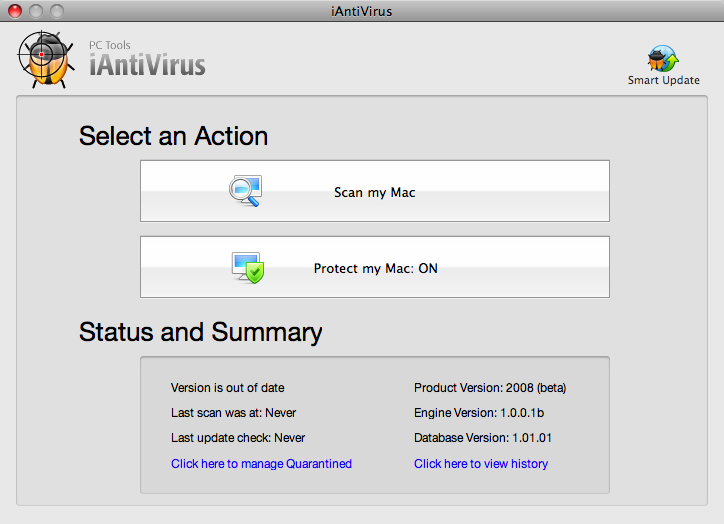

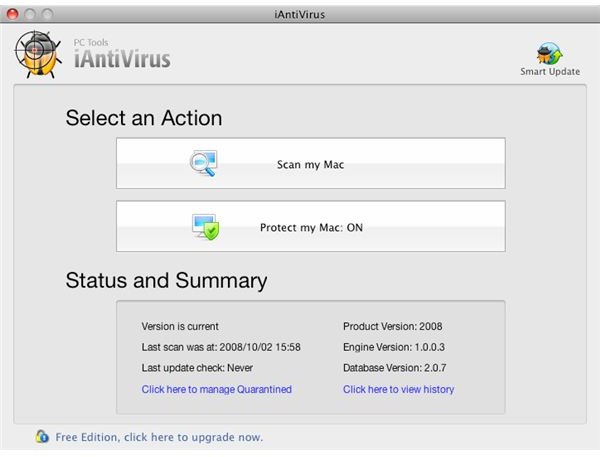

- Iantivirus mac

- Discovery plus -com

- Golf clash wind chart app

- Petrify definition

- Ethernet loopback testing

- #Iantivirus mac mac os x

- #Iantivirus mac zip file

- #Iantivirus mac manual

- #Iantivirus mac archive

- #Iantivirus mac software

January 27th, 2014 at 8:49 AM EST, modified

Update: Many people have completely ignored some of the cautionary information mentioned in the Scope section, and have erroneously assumed that the anti-virus apps at the top of the test results are the best to use overall. For this reason, I will not be repeating these tests. Feel free to read on to see the results of the testing, but please read the entire article, and don’t just skip ahead to the results. If you are looking for advice about what anti-virus software to use, you would be better served by reading my Mac Malware Guide.

Almost exactly one year ago, I completed a round of tests of 20 different anti-virus programs on the Mac. Because this is an area of software that is in almost constant flux, I felt it was important to repeat that test this year. I was very curious about whether these programs were still as effective (or ineffective) as they had been, and how well they detected new malware that had appeared since the last test was performed.

After last year’s testing, I received a number of requests for tests of other apps. This year’s testing sees a change in some of the apps being tested. Four new apps were added, while two were removed from testing (one simply because it was redundant).

The malware samples used also went through a change. Some samples were removed, in an attempt to remove any that might have been deemed questionable, while others were added. Multiple samples of each of nine new malicious programs, which did not exist at the time of last year’s testing, were included.

Scope

As with last year, it’s important to understand the scope of this testing. This test is a measure only of the detection of specific malware samples when performing a manual scan. It makes no attempt to quantify the performance or stability of the various anti-virus apps, or to compare feature sets, or to identify how well an anti-virus app would block an active infection attempt. In a way, this test is merely a probe to see what items are included in the database of signatures recognized by each anti-virus app. The success of an app in this testing should not be taken as endorsement of that app, and in fact, some apps that performed well appear to have anecdotal problems that frequently appear in online forums.

It is also important to understand small variations in the numbers. Some of the software that was tested varied from each other, or from last year’s testing, by only a couple percentage points. It’s important to understand that such a variation is not significant. A 98% and a 97%, or a 60% and a 59%, should be considered identical, for all intents and purposes.

Testing methodology was mostly the same as last year. A group of 188 samples, from 39 different malware families, was used for testing. Any samples that were not already present in the VirusTotal database were uploaded to VirusTotal, so that the samples would be available to the anti-virus community. The SHA1 checksum of each sample is included in the data, to allow those with access to VirusTotal to download the samples used and replicate my tests.

Where possible, the full, original malware was included in testing. In many cases, such a sample will be found within a .dmg archive on VirusTotal, but such samples were not included in that form. All items were removed from their archives, and the archives were discarded, in order to put all anti-virus engines on a level playing field. (Some will check inside such archives and some will not.) In a number of cases, I have not been able to obtain full copies of the malware, but included executable components and the like.

Testing was done in virtual machines in Parallels Desktop 2.951362. I started with a virtual machine (VM) that consisted of a clean Mac OS X 10.9.1 installation, with Chrome and Firefox also installed. A snapshot of this system was made, and then this VM was used as the basis for all testing. I installed each anti-virus app (engine) in that VM, saved a snapshot, reverted to the original base VM and repeated. Once installations were done, I ran each VM and updated the virus definitions in each anti-virus app (where possible), then saved another snapshot of this state and deleted the previous one. The end result was a series of VMs, each containing a fully up-to-date anti-virus app, frozen at some time on January 16.

After that point, testing began. Testing took multiple days, but with the network connection cut off, the clock in the virtual system remained set to January 16, shortly after the anti-virus software was updated, and further background updates were not possible. Malware was copied onto the system inside an encrypted .zip file (to prevent accidental detection), which was then expanded into a folder full of samples. Each anti-virus app had any real-time or on-access scanning disabled, to prevent premature detection of malware.
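For readers who want to check samples against the published SHA1 checksums, or to compare detection percentages with the caveat about small variations in mind, here is a minimal Python sketch. It is not the tooling used for these tests. The function names, the folder layout, and the two-point `tolerance` are all assumptions made for illustration; the article only says that differences of "a couple percentage points" are not significant.

```python
import hashlib
from pathlib import Path


def sha1_of_file(path, chunk_size=65536):
    """Return the hex SHA-1 digest of a file, reading it in chunks."""
    digest = hashlib.sha1()
    with open(path, "rb") as handle:
        # Read fixed-size chunks so large samples are not loaded into memory at once.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def catalog_samples(folder):
    """Map each regular file under `folder` (hypothetical layout) to its SHA-1."""
    root = Path(folder)
    return {
        str(p.relative_to(root)): sha1_of_file(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def detection_rate(detected, total):
    """Percentage of samples flagged during a manual scan."""
    if total <= 0:
        raise ValueError("total must be positive")
    return 100.0 * detected / total


def effectively_equal(rate_a, rate_b, tolerance=2.0):
    """Treat two rates as identical when they differ by at most `tolerance`
    percentage points. The default of 2.0 is an assumed reading of
    "a couple percentage points", not a value stated in the article."""
    return abs(rate_a - rate_b) <= tolerance
```

With these helpers, `effectively_equal(98.0, 97.0)` and `effectively_equal(60.0, 59.0)` both return `True`, matching the point that such pairs should be read as the same result. The checksums from `catalog_samples` can be compared against the SHA1 values listed in the data to confirm that a downloaded sample is the one that was tested.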